Short Bio

Donny (Yuedong) Chen is a Research Scientist at ByteDance Seed (Singapore), developing 3D and 4D foundation models. Disclaimer: all views are solely his own.

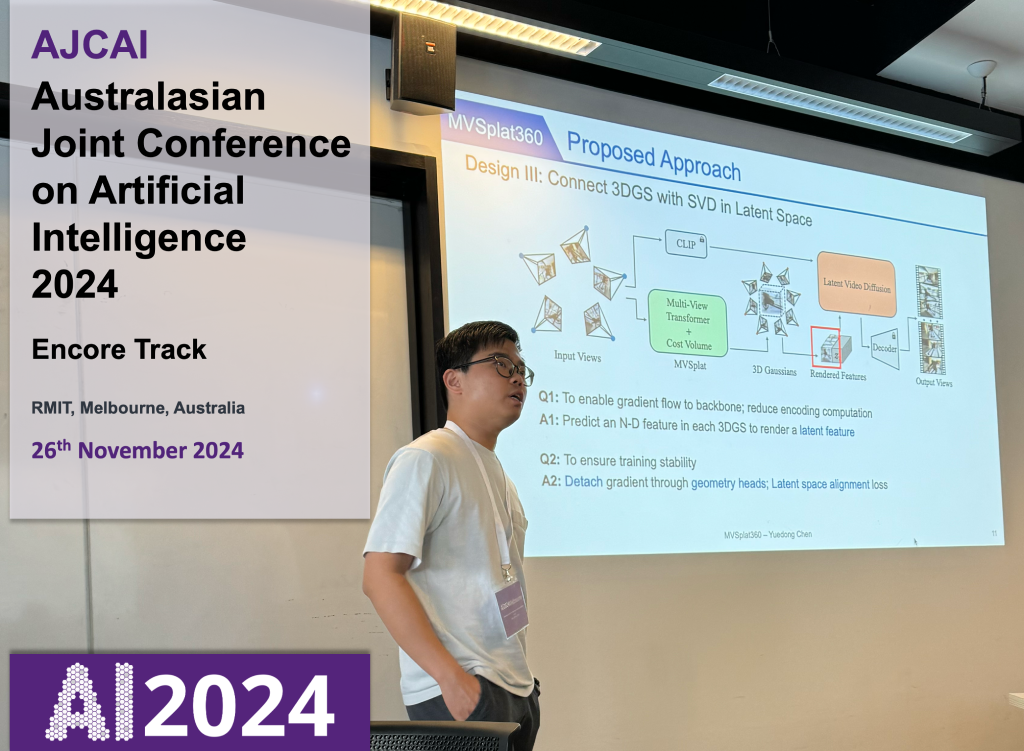

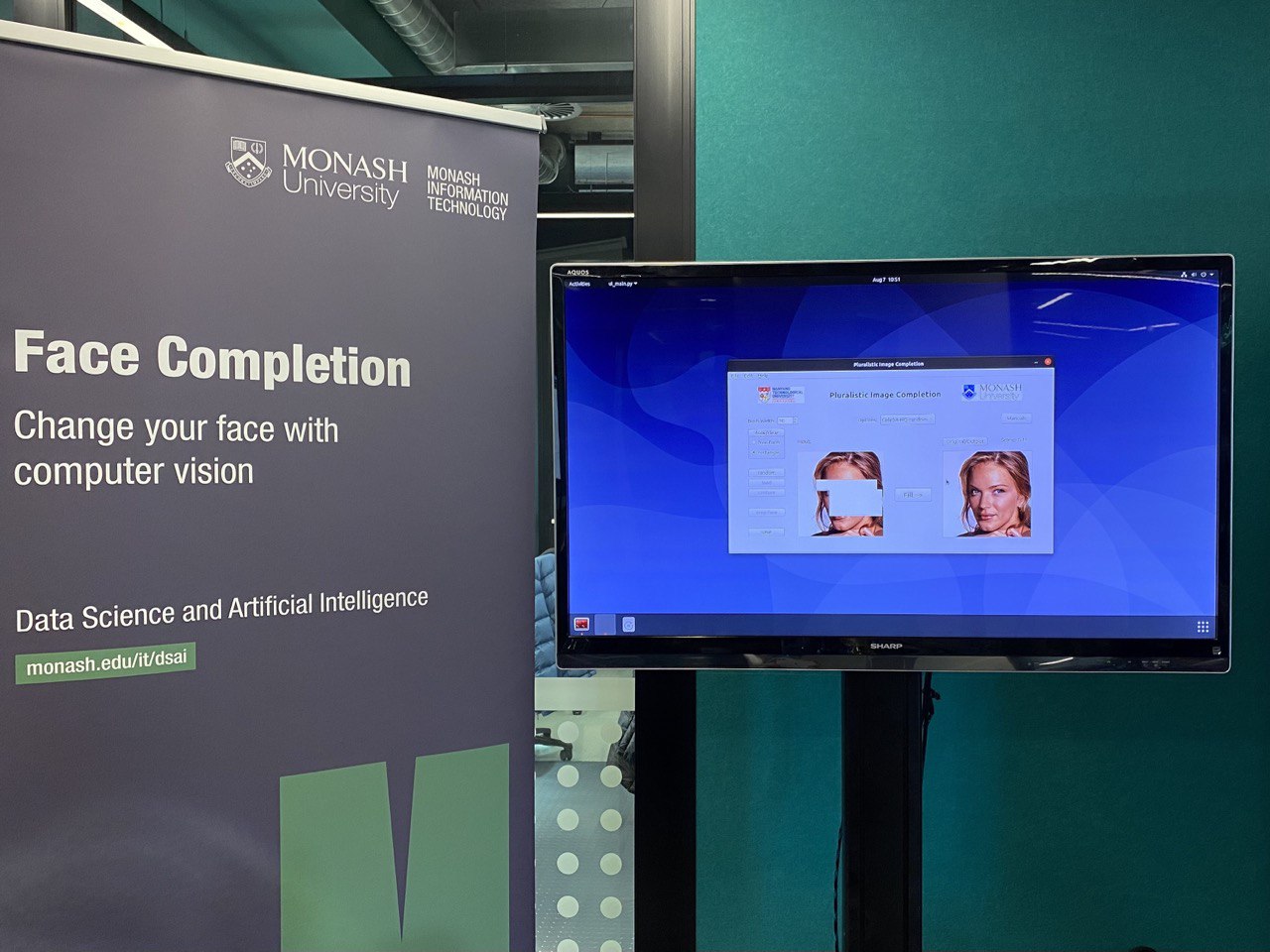

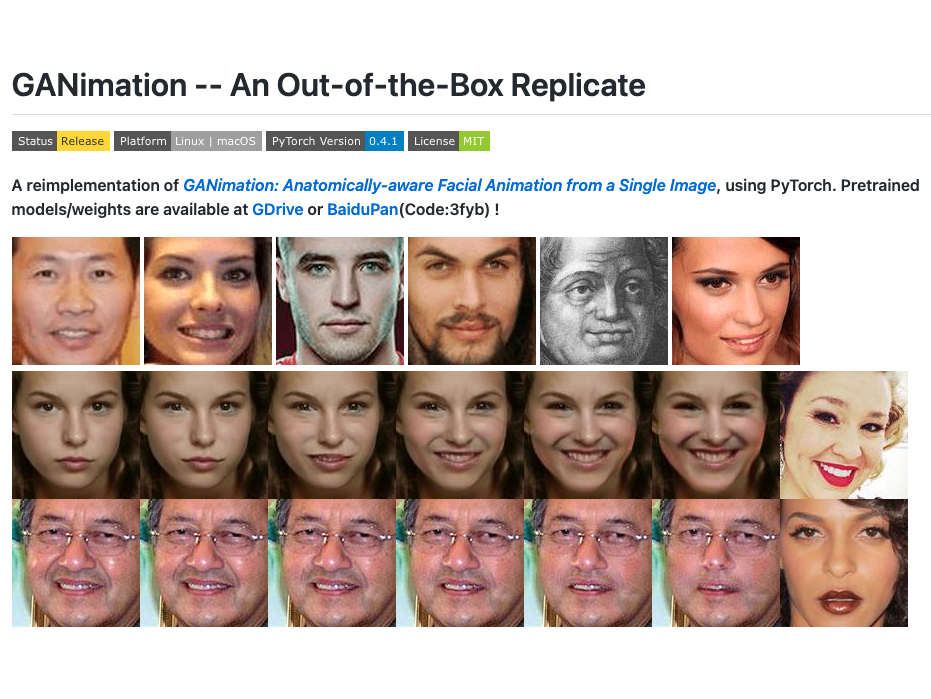

Previously, Donny earned his PhD at Monash University (Australia) under the supervision of Prof. Jianfei Cai, Prof. Tat-Jen Cham, and Dr. Bohan Zhuang. His PhD research focused on feed-forward novel view synthesis from sparse observations. During his PhD, he also collaborated with Haofei Xu, Prof. Marc Pollefeys, Prof. Andreas Geiger, Dr. Chuanxia Zheng, Prof. Andrea Vedaldi, and Dr. Qianyi Wu.

Before that, he was a Research Assistant at the Institute for Media Innovation, NTU (Singapore). During this period, his research focused on enhancing emotion recognition by incorporating human prior knowledge.

He completed both his MEng and BEng degrees at Sun Yat-sen University, majoring in Software Engineering. During his BEng studies, he also spent a semester as an exchange student at National Chi Nan University (Taiwan), where he worked closely with David Cheng.

Selected Publications

🤖🧠👌🏼 He prefers simple yet effective solutions

* indicates Equal Contribution; † indicates Project Lead

[project page] [demo] [gallery] [news] [Spaces of the Week #4] [Paper of the Day #2]

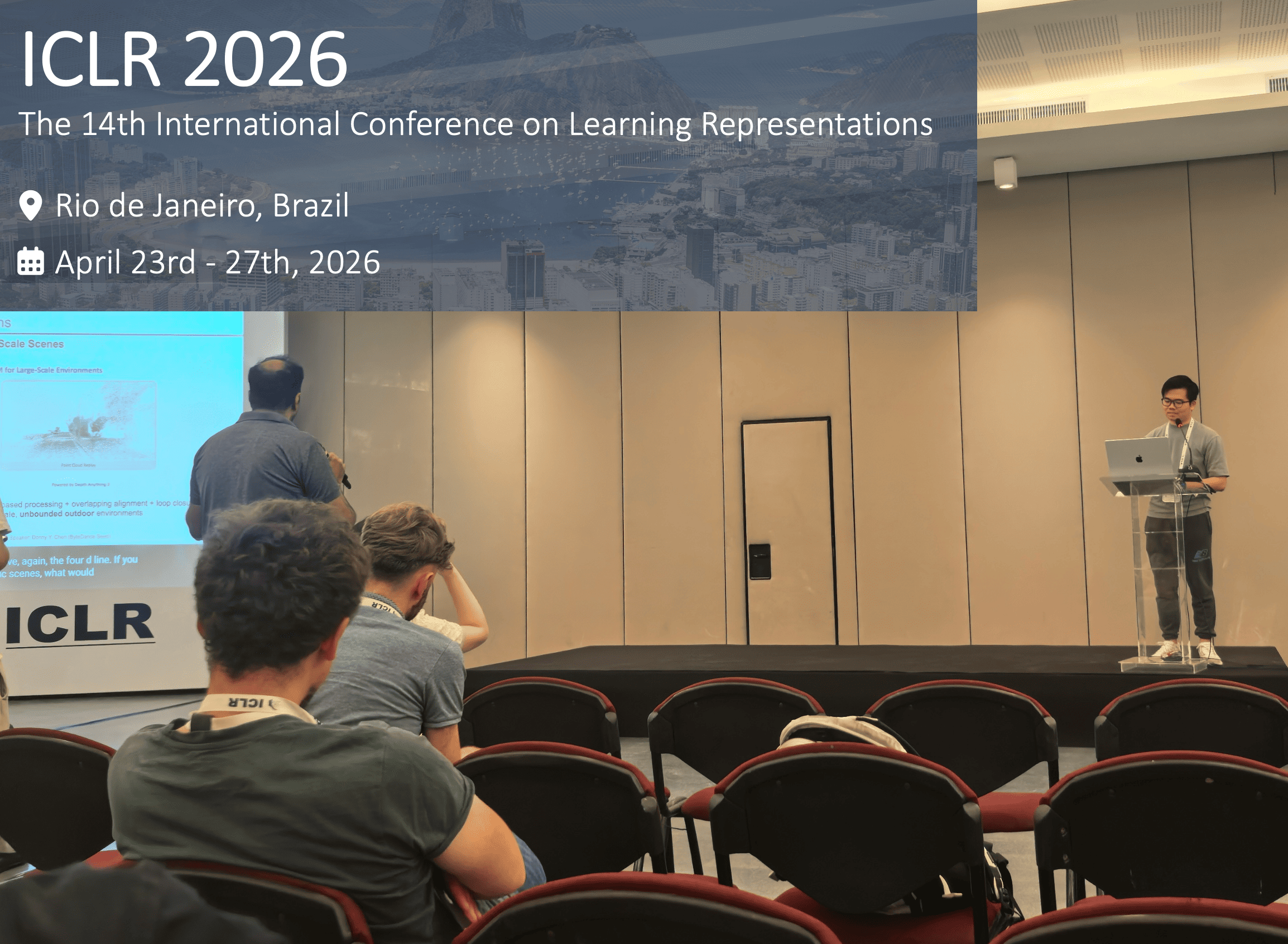

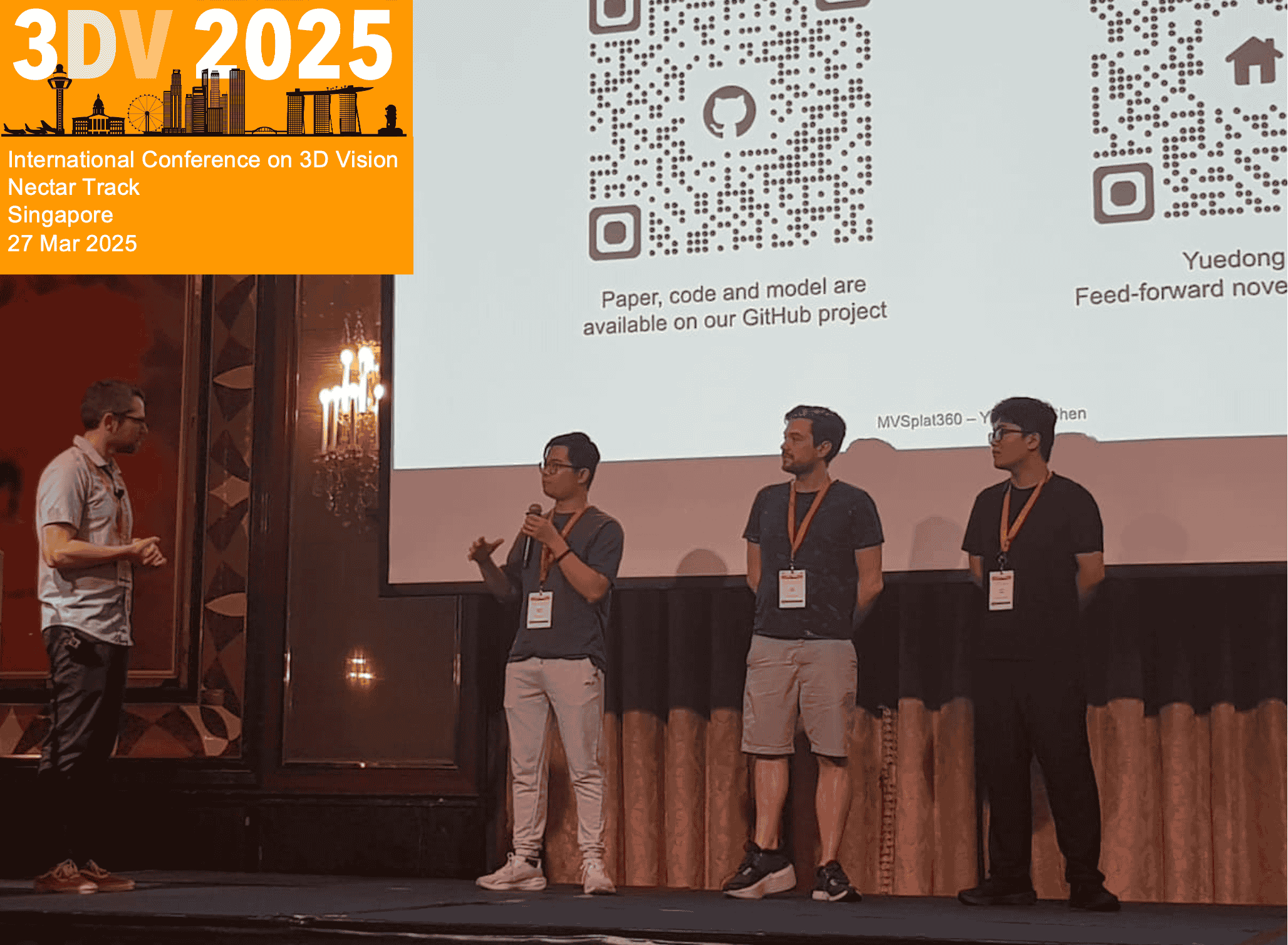

[project page] [demo] [gallery] [news] [Spaces of the Week #4] [Paper of the Day #2] [also presented at ICLR 2026 Oral (recording, slides)]

More on Google Scholar

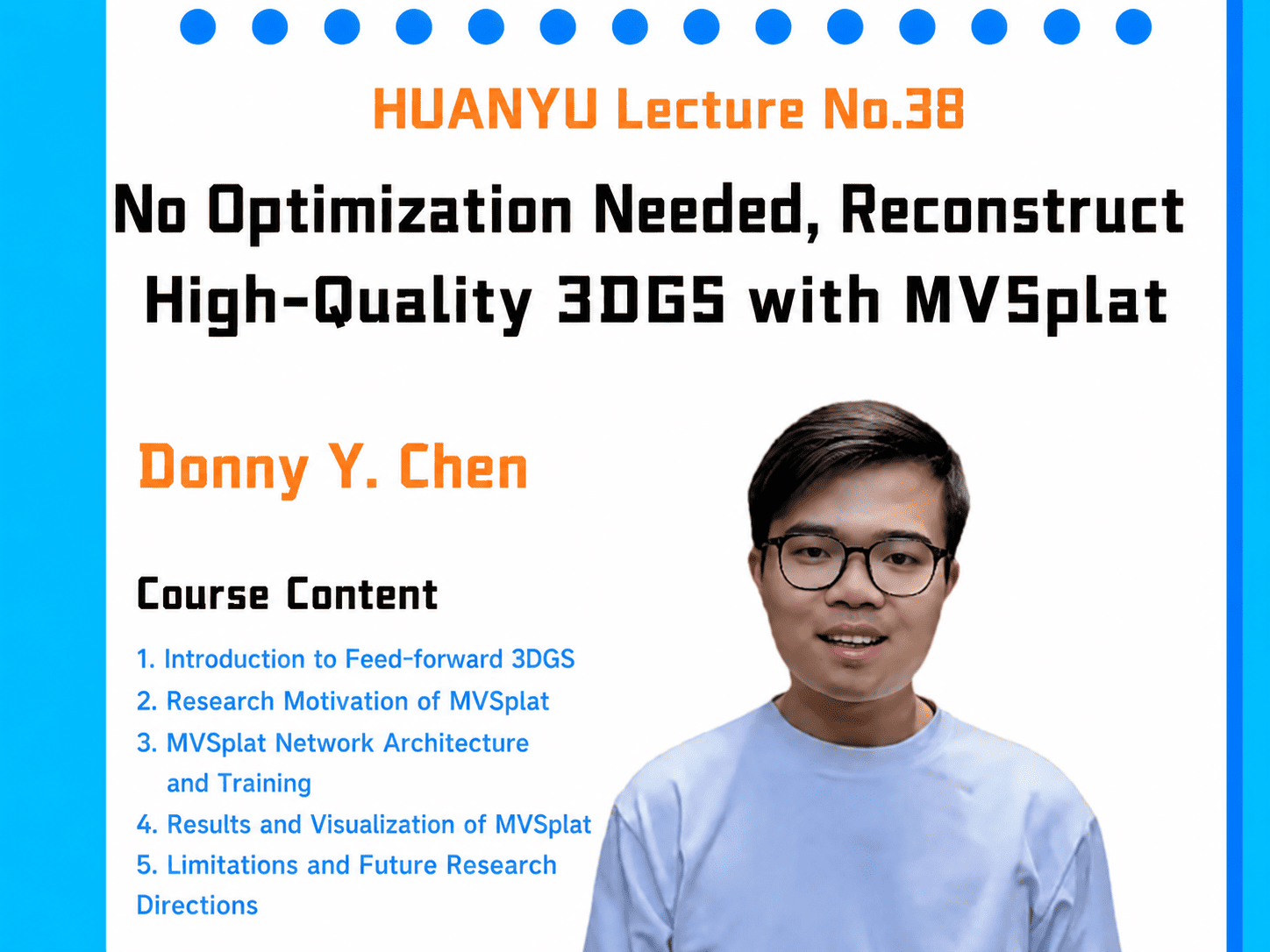

Projects & Talks

- 28-01-2025, Invited talk "Feed-forward NVS from Sparse Inputs" at Amazon, Tel Aviv, hosted by Lior Fritz.

- 08-11-2024, Invited talk "Feed-forward Novel View Synthesis" at Wayve, London, hosted by Joe Polin.

Miscellanies

- Conference Reviewer: ECCV('24-'26), CVPR('23-'26), ICCV('23-'25), NeurIPS('24-'25), ICLR('25-'26), ICML('25), 3DV('24-'26), AAAI('24-'26), ACMMM('21-'24), ACCV('24), ISMAR('23-'24), IEEEVR('24)

- Journal Reviewer: TPAMI, IJCV, TIP, TVCG, TMM, TCSVT, TOMM, TVCJ, Computers & Graphics, The Visual Computer

- Donny is a native speaker of Teochew, is fluent in English, Cantonese, and Mandarin, and is also familiar with Singlish.

- You are welcome to use this personal homepage as a template for your own. See the documentation for setup notes.
